How “helpful” AI sidebar tools quietly become your largest data-leak vector

AI browser assistants are exploding in popularity — ChatGPT extensions, Bing sidebar, Google Gemini side panel, AI writing helpers, meeting summarizers, autofill tools, and productivity boosters.

They promise convenience.

They deliver risk.

This week’s attack deep-dive covers AI Browser Assistant Injection — a fast-growing threat where AI-powered browser tools silently read, store, analyze, or leak sensitive data from your authenticated sessions.

This is not theoretical.

It is happening right now across enterprises.

1. Why AI Browser Assistants Are Extremely High-Risk

AI assistants typically request sweeping permissions that allow them to:

- Read and change all data on all websites

- Capture keystrokes

- Access DOM and forms

- Intercept API calls

- Modify pages

- Automatically send page content to LLM servers

Users approve these prompts instantly, without understanding the impact.

Once granted, these assistants can:

- Scrape entire dashboards

- Read tokens, cookies, and JWTs

- Monitor payment flows

- Capture PII and customer details

- Send sensitive data to external AI APIs

None of this triggers backend alerts.

2. The AI Assistant Attack Flow

Phase 1 — User Installs the AI Sidebar

The tool looks safe:

- From a well-known AI brand

- Tens of thousands of downloads

- Good ratings

- “Productivity assistant” messaging

Users trust it instantly.

Phase 2 — Assistant Requests Broad Permissions

The extension asks for permissions like:

    "permissions": [
      "tabs",
      "storage",
      "activeTab",
      "scripting",
      "webRequest",
      "*://*/*"
    ]

Users click Allow without reading.

Combined, these permissions let the extension read and modify every page the user visits.

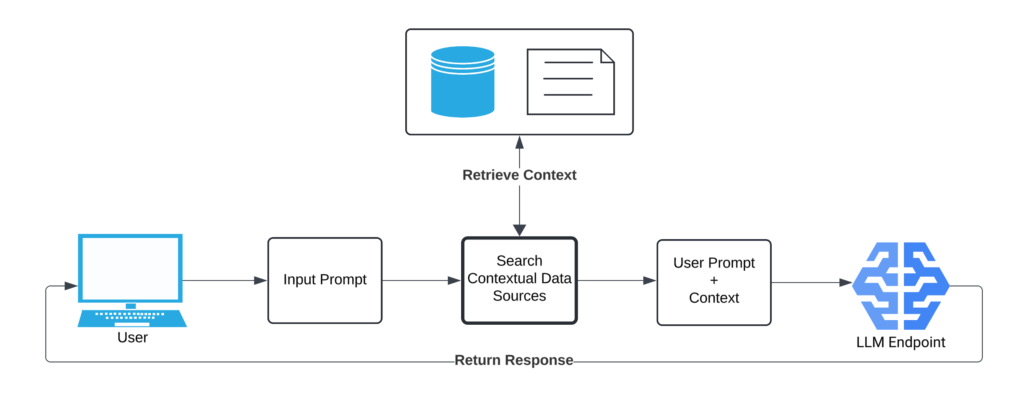
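
As a rough illustration of how a reviewer might triage a permission list like the one above, the sketch below flags entries that grant all-host access or widen what an extension can observe. The names `auditManifest`, `isBroadHostPattern`, and `SENSITIVE_PERMISSIONS` are hypothetical, and the choice of which permissions count as sensitive is an assumption, not an official classification.

```typescript
// Hypothetical helper types and names; nothing here is a browser API.
interface ManifestLike {
  permissions?: string[];
  host_permissions?: string[]; // Manifest V3 lists host patterns here
}

// Assumption: API permissions worth a second look during review.
const SENSITIVE_PERMISSIONS = ["tabs", "scripting", "webRequest", "cookies", "debugger"];

// True for match patterns that cover every host, e.g. "<all_urls>" or "*://*/*".
function isBroadHostPattern(entry: string): boolean {
  return entry === "<all_urls>" || /^(\*|https?):\/\/\*\//.test(entry);
}

// Return one human-readable finding per risky manifest entry.
function auditManifest(manifest: ManifestLike): string[] {
  const entries = (manifest.permissions || []).concat(manifest.host_permissions || []);
  const findings: string[] = [];
  for (const entry of entries) {
    if (isBroadHostPattern(entry)) {
      findings.push(`broad host access: ${entry}`);
    } else if (SENSITIVE_PERMISSIONS.indexOf(entry) !== -1) {
      findings.push(`sensitive permission: ${entry}`);
    }
  }
  return findings;
}

const manifestFindings = auditManifest({
  permissions: ["tabs", "storage", "activeTab", "scripting", "webRequest", "*://*/*"],
});
console.log(manifestFindings);
```

For this sample input the sketch reports `tabs`, `scripting`, `webRequest`, and the all-host pattern, while `storage` and `activeTab` pass without a finding.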

Phase 3 — AI Assistant Captures Page Content

The assistant continuously scrapes:

- DOM content

- Inputs and form values

- Buttons and labels

- Full text from each page

- Under-the-hood API responses

This data is automatically sent to the AI model for “context.”

Some assistants repeat this capture on every page change, or on a timer of a few seconds.

Phase 4 — Sensitive Data Is Exported Silently

The content is then:

- Embedded in prompts

- Sent to remote APIs

- Logged for training or analytics

- Indexed externally

- Stored outside your security boundary

This includes:

- Customer data

- Payment details

- Internal dashboards

- Production logs

- JWTs or session tokens

- Private messages

- Financial details

This is a data breach — but not an “attack.”

It’s the intended functionality of the assistant.
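
To make the exposure concrete, here is a minimal sketch of a check that could be run over text before it leaves the browser, or over captured prompt payloads after the fact. It looks for two of the data types listed above: JWT-shaped strings and card numbers that pass the Luhn checksum. The function names and patterns are illustrative assumptions and are far from exhaustive.

```typescript
// Hypothetical scanner; the patterns are illustrative, not exhaustive.
const JWT_PATTERN = /\beyJ[A-Za-z0-9_-]+\.[A-Za-z0-9_-]+\.[A-Za-z0-9_-]+/g;
const CARD_CANDIDATE = /\b\d(?:[ -]?\d){12,18}\b/g;

// Luhn checksum, used to discard digit runs that cannot be card numbers.
function passesLuhn(digits: string): boolean {
  let sum = 0;
  let doubleNext = false;
  for (let i = digits.length - 1; i >= 0; i--) {
    let d = digits.charCodeAt(i) - 48; // "0" is char code 48
    if (doubleNext) {
      d *= 2;
      if (d > 9) d -= 9;
    }
    sum += d;
    doubleNext = !doubleNext;
  }
  return sum % 10 === 0;
}

interface PayloadFindings {
  jwts: number;
  cardNumbers: number;
}

// Count JWT-shaped tokens and Luhn-valid card numbers in a text payload.
function scanPayload(text: string): PayloadFindings {
  const jwts = text.match(JWT_PATTERN) || [];
  const cards = (text.match(CARD_CANDIDATE) || [])
    .map((c) => c.replace(/[ -]/g, ""))
    .filter((c) => passesLuhn(c));
  return { jwts: jwts.length, cardNumbers: cards.length };
}

const samplePayload =
  '{"auth":"Bearer eyJhbGciOiJIUzI1NiJ9.eyJzdWIiOiIxIn0.c2ln",' +
  '"card":"4111 1111 1111 1111","order":"1234 5678 9012 3456"}';
console.log(scanPayload(samplePayload));
```

The Luhn step is there to cut false positives: the order number in the sample has sixteen digits but fails the checksum, so only the test card number is counted.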

Phase 5 — Extension Can Inject or Modify Content

AI browser assistants also use content injection to:

- Rewrite text on the page

- Add UI overlays

- Insert suggestion boxes

- Modify existing DOM nodes

This becomes an injection vector.

Attackers can exploit:

- A compromised update mechanism

- Man-in-the-middle modifications

- Supply-chain poisoning

One malicious update = immediate full compromise of every user’s browser context.
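
One partial safeguard is to compare the permissions requested by each new version against the version already installed. The sketch below assumes the two permission lists have already been extracted from the manifests; `diffPermissions` and `needsReview` are hypothetical names.

```typescript
// Hypothetical review step: compare the permissions of two extension versions.
interface PermissionDiff {
  added: string[];
  removed: string[];
}

function diffPermissions(previous: string[], next: string[]): PermissionDiff {
  return {
    added: next.filter((p) => previous.indexOf(p) === -1),
    removed: previous.filter((p) => next.indexOf(p) === -1),
  };
}

// Assumption: any newly requested permission warrants a manual look.
function needsReview(diff: PermissionDiff): boolean {
  return diff.added.length > 0;
}

const installedVersion = ["activeTab", "storage"];
const updatedVersion = ["activeTab", "storage", "webRequest", "*://*/*"];
const permissionDiff = diffPermissions(installedVersion, updatedVersion);
console.log(permissionDiff.added, needsReview(permissionDiff));
```

This only catches permission escalation. An assistant that already holds all-host access can ship a malicious update without requesting anything new, which is why a diff like this complements runtime monitoring and does not replace it.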

3. Why AI Browser Assistant Attacks Are Devastating

These tools bypass every traditional security layer:

| Layer | Why It Fails |
|---|---|
| WAF | Requests appear valid |
| SIEM | Nothing malicious happens server-side |
| MFA | The session is already authenticated |
| CSP | Extension content scripts are not bound by the page’s CSP |
| Antivirus | It’s a “trusted” extension |
| SRI | Covers only resources the page itself loads, not extension-injected content |
| Logging | Activity stays client-side |

Backend systems see legitimate user actions.

The assistant is the one controlling or reading them.

4. Real-World Consequences

AI assistant injection leads to:

- Account takeover

- Session hijacking

- Payment redirection

- Data scraping at scale

- Internal dashboard exfiltration

- Source code leaks

- Credential harvesting

- Replay of privileged API requests

And since the assistant performs actions as the user, attribution becomes almost impossible.

5. Where Early Detection Was Possible — but Missed

Organizations can catch this activity early by monitoring:

1. DOM Mutation Anomalies

AI overlays inject new nodes into the page.

2. Unauthorized Event Listeners

Assistants attach listeners to inputs, forms, and buttons.

3. Token Access Attempts

Extensions hook fetch/XHR to read authorization headers.

4. New Outbound Network Patterns

Transmissions to AI endpoints (OpenAI, Gemini, Claude, etc.) spike.

5. Behavior Drift

Sensitive flows show:

- Delays

- Rewritten UI elements

- Hidden form fields

- Duplicate event triggers

Without runtime monitoring, all of this goes unnoticed.
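
As one example of such a runtime check, the token-access signal (item 3) can be approximated by testing whether network functions still look like browser built-ins. The sketch below relies on the fact that built-in functions stringify to a body containing `[native code]`; `looksNative` and `findPatched` are hypothetical names. It is a heuristic only: a hook can spoof its own `toString`, bound functions also report native code, and extension content scripts run in an isolated world, so the page sees only hooks injected into its main world.

```typescript
// Heuristic: built-in functions stringify to a body containing "[native code]".
function looksNative(fn: Function): boolean {
  return /\{\s*\[native code\]\s*\}/.test(Function.prototype.toString.call(fn));
}

// Hypothetical helper: given named functions, return the names that look patched.
function findPatched(candidates: Record<string, Function>): string[] {
  return Object.keys(candidates).filter((name) => !looksNative(candidates[name]));
}

// Stand-ins so the sketch runs outside a browser:
// a built-in plays the untouched function, a wrapper plays the hook.
const nativeFn = Math.max;
const hookedFn = function (...args: number[]): number {
  // A real hook would inspect its arguments (e.g. request headers) here.
  return nativeFn(...args);
};

console.log(findPatched({ nativeFn, hookedFn })); // reports only "hookedFn"
```

In a page, the same helper could be called with `window.fetch` and `XMLHttpRequest.prototype.send` as the candidates, ideally from a script that loads before any third-party code.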

6. Why Policies and Blocking Aren’t Enough

Organizations attempt:

- Extension blacklists

- Browser policies

- Employee training

- AI usage guidelines

But AI assistants update silently.

Users find workarounds.

New variants appear daily.

Shadow AI usage explodes.

The only workable defense is runtime visibility into what’s happening in the browser.

7. How BreachFin Detects AI Browser Assistant Injection

BreachFin continuously monitors the browser for:

Unauthorized script behavior

- Hooks into network functions

- Attempts to intercept tokens

- Unexpected event listeners

DOM anomalies

- AI-generated overlays

- Injected suggestion UI

- Hidden or modified fields

Outbound exfiltration

- Calls to remote AI APIs

- Payloads containing sensitive data

Behavior drift

- Changes in how checkout/auth flows execute

- Timing anomalies in UI actions

- New or altered scripts on critical pages

BreachFin detects these issues before user data is compromised at scale.
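
To illustrate the outbound-exfiltration signal in general terms, the sketch below counts requests to a watchlist of AI API hosts from a list of observed URLs. It is a generic example and not a description of how BreachFin works. The watchlist contents, `hostnameOf`, and `countAiCalls` are assumptions, and how the URLs are collected (proxy logs, for instance) is left open.

```typescript
// Hypothetical watchlist; extend it with the AI endpoints relevant to your environment.
const AI_API_HOSTS = [
  "api.openai.com",
  "api.anthropic.com",
  "generativelanguage.googleapis.com",
];

// Extract the hostname with a regex so the sketch needs no URL global.
// Simplification: URLs with embedded credentials (user@host) are not handled.
function hostnameOf(url: string): string | null {
  const m = /^[a-z][a-z0-9+.-]*:\/\/([^\/:?#]+)/i.exec(url);
  return m ? m[1].toLowerCase() : null;
}

// Count observed requests per watched host.
function countAiCalls(urls: string[]): Record<string, number> {
  const counts: Record<string, number> = {};
  for (const url of urls) {
    const host = hostnameOf(url);
    if (host !== null && AI_API_HOSTS.indexOf(host) !== -1) {
      counts[host] = (counts[host] || 0) + 1;
    }
  }
  return counts;
}

const observedUrls = [
  "https://api.openai.com/v1/chat/completions",
  "https://shop.example/checkout",
  "https://API.OpenAI.com/v1/embeddings",
  "https://api.anthropic.com/v1/messages",
];
console.log(countAiCalls(observedUrls));
```

Comparing these counts against a per-user or per-page baseline is what turns them into a spike signal; the raw counts alone say only that the traffic exists.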

Implement BreachFin for runtime tamper detection so that drift, injection, and unauthorized script behavior are caught early.

Final Takeaway

AI browser assistants are not malware.

They are trusted tools behaving in unsafe ways.

This makes them:

- Hard to detect

- Hard to block

- Hard to audit

- Easy to exploit

If your security model cannot see:

- What runs in the browser

- How scripts behave at runtime

- Whether AI assistants are injecting or extracting data

then your entire frontend is operating on blind trust.

BreachFin closes that visibility gap.
